We use AI tools every day. They shorten the loop from question to evidence — they do not replace judgement, review, or the boring work of defining "done".

If your team has adopted Copilot, Cursor, Claude, or similar, you have already felt the upside: scaffolding, boilerplate, test cases, and exploratory spikes arrive faster than they used to. The bigger risk is not hallucination, because most teams already know to verify what the tools produce. The bigger risk is that speed feels like progress while the architecture, data model, and operational story are still unsettled.

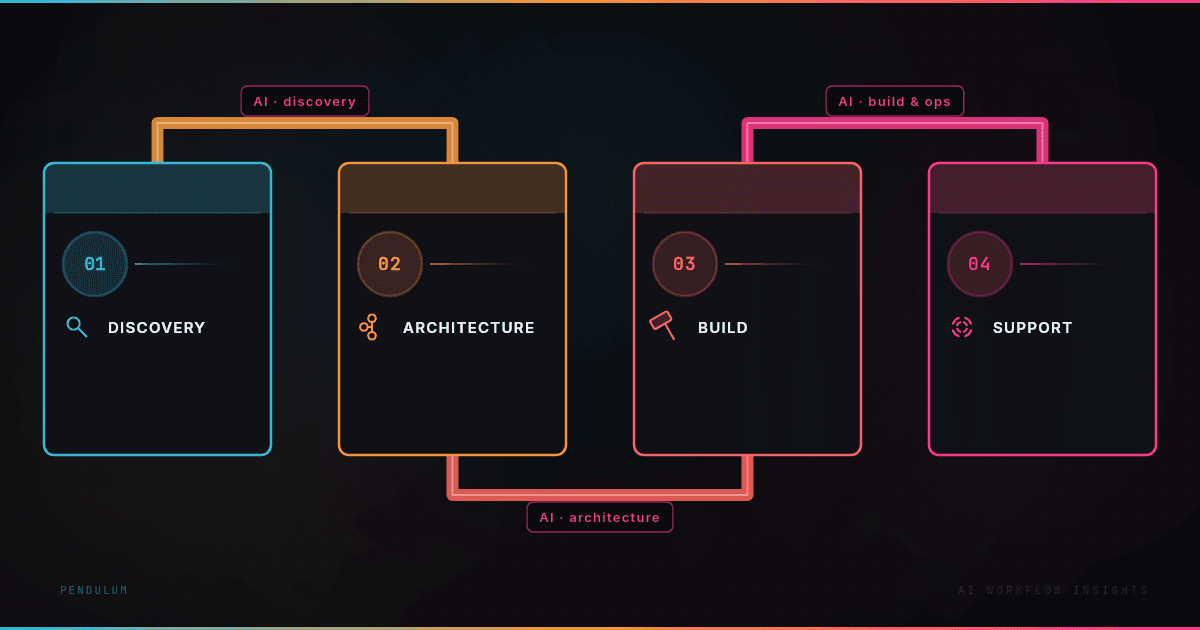

Our development-workflow pillar is simple to state and hard to stick to under pressure:

- Use AI to compress exploration, not to skip it.
- Keep humans responsible for boundaries: security, data shape, deployment, and what "good" means for the user.
- Make quality visible with lightweight habits that survive busy weeks.

Shorter loop from idea to evidence

Before we commit to a data-heavy UI or a new service boundary, we still want a cheap prototype and a short list of failure modes. The tooling changes with the task; the discipline does not.

A useful pattern: time-box a spike, produce something runnable, then ask explicitly where it breaks first, whether under load, on bad input, or during a partial outage. AI is excellent at generating the happy path. Production spends most of its life on the sad path.

One rule we actually enforce

No feature ships without a written "how we will know it worked" check, even if it is a single bullet. It might be a metric, a manual script, a query, or a screenshot of a state change. It sounds small, but it saves weeks of debate about whether the last PR counted.

That rule pairs naturally with review: reviewers can ask for the check, not just the code.
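One form the check can take is a query. Below is a minimal sketch in Python. It assumes a hypothetical password-reset feature and a hypothetical `password_resets` table with a nullable `used_at` column. The function name `reset_flow_worked` and the 50% threshold are illustrative, not a prescribed standard.

```python
import sqlite3

# Hypothetical schema (illustration only): `password_resets` records when a
# reset link was issued and when, if ever, it was used (`used_at` is NULL if unused).
def reset_flow_worked(conn: sqlite3.Connection, min_completion_rate: float = 0.5) -> bool:
    """Return True if at least `min_completion_rate` of issued resets were completed."""
    # COUNT(*) counts every row; COUNT(used_at) counts only non-NULL values.
    issued, completed = conn.execute(
        "SELECT COUNT(*), COUNT(used_at) FROM password_resets"
    ).fetchone()
    if issued == 0:
        return False  # no evidence yet: treat as "not proven", not as success
    return completed / issued >= min_completion_rate


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE password_resets (issued_at TEXT, used_at TEXT)")
    conn.executemany(
        "INSERT INTO password_resets VALUES (?, ?)",
        [
            ("2024-01-01", "2024-01-01"),
            ("2024-01-02", None),
            ("2024-01-03", "2024-01-03"),
        ],
    )
    print(reset_flow_worked(conn))  # True: 2 of 3 resets were completed
```

The code matters less than the agreement it records. The threshold, and what counts as "no evidence yet", are written down before the PR merges, so a reviewer can ask for them by name.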

Architecture still wins velocity

AI can outpace your ability to name what you are building. When that happens, the fix is not less AI but clearer ownership: who decides schema changes, who signs off on auth and session behaviour, and who owns the deploy path and rollback.

We are not anti-AI; we are pro-clarity. The teams we help usually need someone to audit the structure, tighten the data model, and finish the last mile to production (observability, error handling, migrations) without throwing away what already works.

If your stack is moving fast but production still feels fragile, get in touch at https://pendulumdev.co.uk/contact. We will be direct about what is worth fixing first.