AI can build you an app in an afternoon. Getting it to production is a different conversation.

We are seeing this pattern accelerate. A team uses Cursor, Claude, or Copilot to scaffold an entire React application. The demo works. The stakeholders are impressed. Then reality arrives: the state management falls apart at scale, the API layer has no error handling, the database schema cannot support the reporting features they promised for Q3, and nobody thought about auth beyond a TODO comment.

This is not a criticism of AI tooling. We use it every day. It is genuinely transformative for velocity. But velocity without architecture is just technical debt generated faster.

The pattern before AI

Before AI-assisted development became mainstream, we were already inheriting systems built by stretched internal teams. Drupal sites with years of accumulated modules and no upgrade path. Laravel apps with business logic buried in controllers that nobody dares refactor. React codebases where every component manages its own state and the data flow looks like a bowl of spaghetti.

Projects like Pots and SCONUL taught us what it looks like to walk into an existing system, understand what actually matters, and restructure without breaking what already works. Pots had a booking platform that needed to scale from MVP to a two-sided marketplace supporting group saver packages, referral tracking, and area-based gardener matching. The original architecture could not support any of that. SCONUL had years of institutional data locked behind a Drupal installation that had grown organically without a coherent data strategy.

In both cases, the solution was not to start over. It was to understand the existing system deeply enough to restructure it incrementally — preserving what worked while building the architecture that should have been there from the start.

The same problem, new flavour

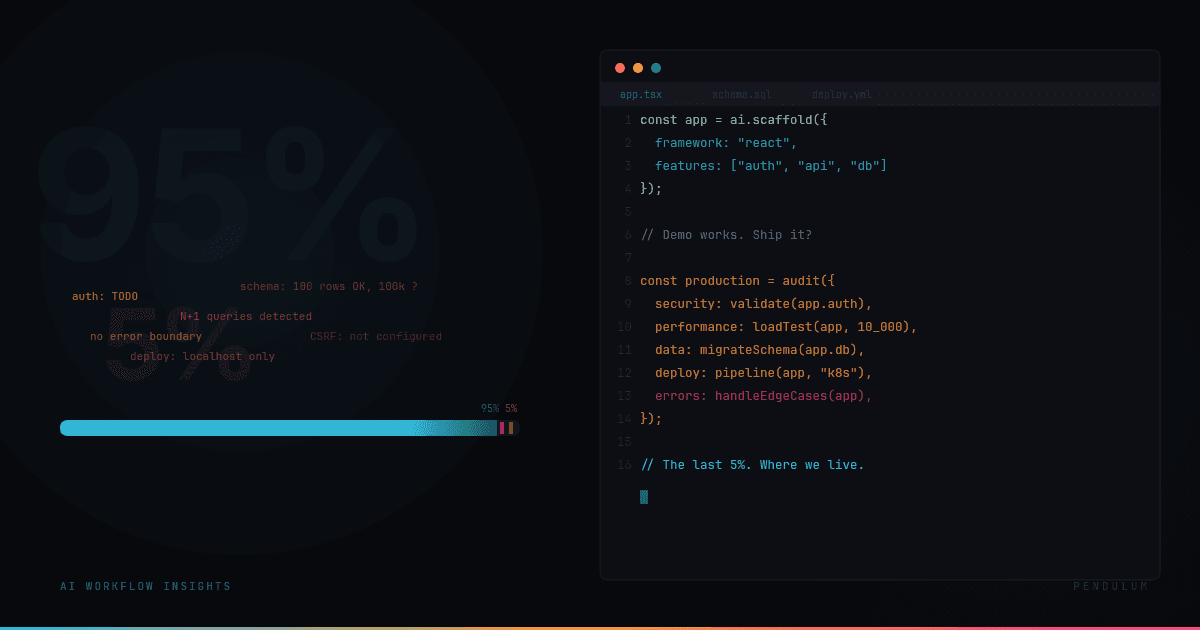

Now the same challenge has a new dimension. Well-intentioned teams, often less experienced developers with genuine domain expertise, use AI to build 95% of something impressive. The UI looks professional. The routes work. The forms submit. It looks like a finished product.

But the last 5% is the hard part. And it is almost always the same 5%:

Performance — The app works fine with 10 users and 100 database rows. At 1,000 concurrent users and 100,000 rows, every N+1 query and unoptimised re-render compounds. AI-generated code tends to be correct but not efficient, because the training data rewards working code, not performant code.
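To make the N+1 pattern concrete, here is a minimal sketch using an in-memory SQLite database. The `customers` and `orders` tables, the `CountingConnection` wrapper, and both function names are hypothetical, invented purely for illustration. The two functions return the same totals, but the first issues one query per customer while the second issues a single aggregate query.

```python
import sqlite3


def make_db(n_customers: int = 50) -> sqlite3.Connection:
    """Build a throwaway in-memory database with two orders per customer."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
    )
    conn.executemany(
        "INSERT INTO customers (id, name) VALUES (?, ?)",
        [(i, f"customer-{i}") for i in range(1, n_customers + 1)],
    )
    conn.executemany(
        "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
        [(i, 10.0) for i in range(1, n_customers + 1) for _ in range(2)],
    )
    return conn


class CountingConnection:
    """Hypothetical wrapper that counts how many queries are sent."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        self.queries = 0

    def query(self, sql: str, params: tuple = ()) -> list:
        self.queries += 1
        return self.conn.execute(sql, params).fetchall()


def totals_n_plus_one(db: CountingConnection) -> dict:
    """One query for the customer list, then one more per customer."""
    totals = {}
    for (customer_id,) in db.query("SELECT id FROM customers"):
        rows = db.query(
            "SELECT total FROM orders WHERE customer_id = ?", (customer_id,)
        )
        totals[customer_id] = sum(total for (total,) in rows)
    return totals


def totals_single_query(db: CountingConnection) -> dict:
    """The same result, with the aggregation pushed into one SQL statement."""
    rows = db.query(
        "SELECT c.id, COALESCE(SUM(o.total), 0) "
        "FROM customers c LEFT JOIN orders o ON o.customer_id = c.id "
        "GROUP BY c.id"
    )
    return dict(rows)
```

With 50 customers the first version sends 51 queries and the second sends 1. The gap grows linearly with the number of rows, which is why the problem stays invisible in a demo and surfaces under real data volumes.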

Security — AI will suggest an auth implementation. It might even look reasonable. But it rarely accounts for session invalidation, token rotation, CSRF in server-rendered forms, or the specific OWASP risks relevant to your data model. The gap between "has authentication" and "is secure" is exactly where breaches happen.
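As one illustration of what "token rotation" and "session invalidation" involve, here is a minimal in-memory sketch of refresh-token rotation with reuse detection. The `RefreshTokenStore` class and its methods are hypothetical names for this example, not any framework's API. A real system would persist the records, add expiry, and bind tokens to a user, all of which are omitted here.

```python
import hashlib
import secrets
from typing import Dict, Optional, Tuple


def _digest(token: str) -> str:
    # Store only a hash, so a leaked table does not expose usable tokens.
    return hashlib.sha256(token.encode()).hexdigest()


class RefreshTokenStore:
    """Hypothetical in-memory store: digest -> (session_id, is_active)."""

    def __init__(self) -> None:
        self._tokens: Dict[str, Tuple[str, bool]] = {}

    def issue(self, session_id: str) -> str:
        token = secrets.token_urlsafe(32)
        self._tokens[_digest(token)] = (session_id, True)
        return token

    def revoke_session(self, session_id: str) -> None:
        """Invalidate every token that belongs to the session."""
        for digest, (sid, _active) in list(self._tokens.items()):
            if sid == session_id:
                self._tokens[digest] = (sid, False)

    def rotate(self, token: str) -> Optional[str]:
        """Swap a valid token for a new one; return None if it is rejected."""
        digest = _digest(token)
        record = self._tokens.get(digest)
        if record is None:
            return None  # unknown token
        session_id, active = record
        if not active:
            # An already-used token was presented again. Treat that as a
            # possible theft and invalidate the whole session.
            self.revoke_session(session_id)
            return None
        self._tokens[digest] = (session_id, False)  # single use
        return self.issue(session_id)
```

The design choice worth noting is the reuse branch: replaying an old token revokes the entire session rather than just failing the request, on the assumption that either the client or an attacker holds a stolen copy.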

Deployment — The code works on localhost. Getting it into a CI/CD pipeline with staging environments, database migrations, environment variable management, and zero-downtime deploys requires infrastructure knowledge that sits outside the application code entirely. AI can scaffold Kubernetes manifests, but it cannot size or tune them if it does not know your traffic patterns.
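One small piece of environment variable management is failing fast when configuration is missing, instead of discovering it on the first request in staging. The sketch below assumes three variables (`DATABASE_URL`, `SESSION_SECRET`, `PORT`) chosen only for illustration; `Settings`, `ConfigError`, and `load_settings` are hypothetical names.

```python
import os
from dataclasses import dataclass
from typing import Mapping


class ConfigError(RuntimeError):
    """Raised at startup when required configuration is absent or invalid."""


@dataclass(frozen=True)
class Settings:
    database_url: str
    session_secret: str
    port: int


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    # Report every missing variable at once rather than one per deploy attempt.
    required = ("DATABASE_URL", "SESSION_SECRET")
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise ConfigError(
            "missing required environment variables: " + ", ".join(missing)
        )

    raw_port = env.get("PORT", "8000")  # default is an assumption for this sketch
    try:
        port = int(raw_port)
    except ValueError:
        raise ConfigError(f"PORT must be an integer, got {raw_port!r}") from None

    return Settings(
        database_url=env["DATABASE_URL"],
        session_secret=env["SESSION_SECRET"],
        port=port,
    )
```

Calling `load_settings()` once at process start means a misconfigured environment stops the deploy immediately, which is usually easier to diagnose than a partial outage later.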

Data modelling — This is where AI-generated projects fail most quietly. The schema works for the demo. It does not work for the reporting, the aggregations, the cross-entity queries, or the compliance requirements that arrive six months later. Fixing a data model after launch is the most expensive kind of technical debt.

Error handling — AI-generated code handles the happy path. The sad path — network failures, partial writes, rate limits, third-party API outages, malformed user input at scale — is where production systems spend most of their time. Robust error handling is not a feature. It is the difference between software and a prototype.
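As a small example of sad-path handling, the sketch below retries an operation that can fail transiently (a network timeout or a rate limit, say) using exponential backoff with jitter. `TransientError` and `call_with_retry` are hypothetical names, and the default attempt count and delays are illustrative assumptions rather than recommendations.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures that are worth retrying."""


def call_with_retry(
    operation: Callable[[], T],
    *,
    max_attempts: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run operation, retrying on TransientError with capped backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")

    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            # Double the ceiling each attempt, cap it, then pick a random
            # delay below it so many clients do not retry in lockstep.
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))

    raise AssertionError("unreachable")
```

Only errors explicitly marked as transient are retried. Anything else, such as malformed input, propagates immediately, because retrying it would only repeat the failure.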

Where we come in

We are not here to replace AI-assisted development. We are building with it ourselves, daily. The difference is knowing where AI output ends and engineering begins.

What we do for teams in this situation is consistent: audit the architecture, identify the structural risks, and fix the design problems that were baked in during generation. We also handle the deployment, the infrastructure, the observability, and the parts that need someone who has shipped production systems before and knows where they break.

Sometimes that means restructuring the data model before the first real user ever logs in. Sometimes it means adding the error handling, monitoring, and alerting that turns a demo into a product. Sometimes it means honest advice that the AI-generated codebase is the wrong foundation and a targeted rebuild of specific layers will save more time than patching.

The cost of the last 5%

The economics are counterintuitive. AI reduces the cost of the first 95% dramatically. That is real, meaningful progress. But it can also create a false sense of completion that delays the hard work.

The last 5% does not cost 5% of the effort. It is where the majority of production engineering lives. Getting through it requires experience with real systems under real load — the kind of experience that comes from years of shipping, maintaining, and evolving production software.

If you have built something with AI and it is stuck in the gap between demo and production, that is exactly the problem we solve.